Jumpin' on the Docker train (better late than never...)

If you're in a technology field, it's easy to develop the feeling that you need to know every concept out there. As if we aren't being paid to learn new things as business needs arise, but to somehow know everything ahead of time.

We make it worse by (oh so very wrongly) assuming that if someone does or knows one thing well, then they must do or know everything well, which of course is never true. It's why George Foreman can sell grills, Jimmy Fallon sports merchandise, and Arnold Schwarzenegger became governor. We know all this on some level, even if we frequently forget it.

For me, today, that technology is Docker. I've had no reason to learn it so far, but it's been around for years, I've heard others talk about it, and now I feel like I should already know it. Well, we're using it where I work to varying degrees and I think I may be delving into some of it soon, so time to get the basics at least...

Now We're Cooking (a distasteful analogy)

If you're learning it for the first time too, here's how I'm imagining it so far, after a few articles and a handful of videos. If you're a chef and a programmer... I'm sorry.

Imagine you're a chef, in charge of recreating old dishes, improving on them, inventing new ones... but there's nothing new under the sun, and ultimately they're going to be built upon some basics, right? You have base ingredients like flour and eggs and sugar. You have a kitchen with a base set of utensils and gadgets - it's not a mechanic's garage, an IT lab, or a physician's office (unless your cooking is really bad).

Traditional servers

You're initially given a style of food prep to recreate, some way it was served centuries ago, and lo and behold, you find a place where they've been serving it that way all along. They're making it all work successfully, but there are no recipes or blueprints to follow. It's a piecemeal thing that's grown and evolved over time.

How do you recreate their success? Is it just the ingredients they use? Is it the machinery? The exact oven or utensils? Do the wood-paneled walls or coffered ceiling somehow play into it? The whimsical art for sale in the front window? The bathrooms? The exact combination of employees, with the same mix of race, gender, age, and personal knowledge?

Your only choice is to recreate everything, exactly as it is in the other kitchen, and good freaking luck with that. You'll have to build a place with the same layout, the same tools, the same plumbing, clones of the employees (this is getting shady). Most of it may be unnecessary, but how do you know for sure? Everything's tightly coupled to everything else. The safest route is all or nothing.

Before virtualization, the only way to guarantee that a complicated piece of software would run correctly on a second machine was to configure it to have exactly the same setup as the first machine it was on. But what if that first machine was a development machine, or had been around for a while? It might have a hundred updates, or a dozen conflicting pieces of software. Maybe the only reason the application worked was that one of the 12 frameworks the developer was using happened to install something she ended up using in the app, and she wasn't even aware of it!

Hardware virtualization (virtual machines)

Realizing you can prepare new foods in the same style by using the same hardware - log cabin, brick oven, cast iron pots - you strike a deal with the owner to renovate the unused space in the back room. Now you can create any food you want... colonial era tiramisu! You'll need employees with similar skills to create similar foods, or you could hire employees with different skills to produce different foods, but the underlying hardware is the same. This analogy is starting to crack at the seams.

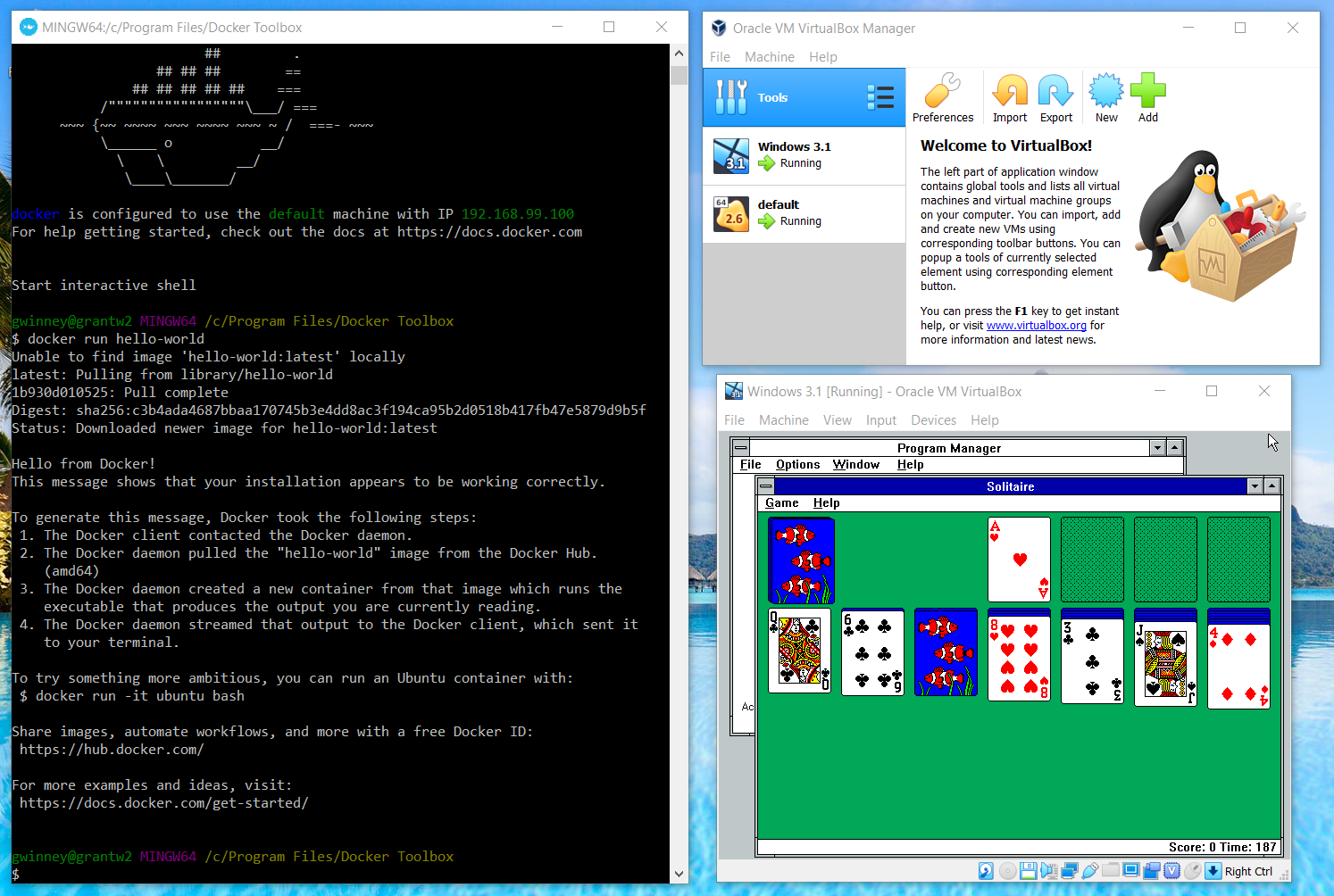

One way to save time and resources is to use hardware virtualization technology, which lets you run an operating system inside another operating system. You've got that nice powerful machine with gigabytes of RAM and terabytes of hard disk space, but you're not using it all. You can set up a virtual machine, running Windows 3.1 or Windows XP for example - or something far more useful. 😉

OS-level virtualization

Wait, you're not interested in making most types of foods - just desserts of yore, like great-great-great-great-grandma's apple pie. You don't just want to reuse the existing building and ovens and sinks, you want to reuse the people and skills too since they happen to be proficient at making desserts. It's just as well - the back room was full of rats, completely unusable.

So you share all the existing hardware and software (that's an ugly way to refer to that portly chef), and simply install more stations nearby in the same big room. You get a portion of the sink and oven and employees' time (all segregated though, so your desserts don't blend together). As you think of more desserts to make, you just repeat the process of adding a new station until the building runs out of room and the plumbing is clogged up with cookie dough and cake batter. Are you actually still reading this?

Docker and other OS-level virtualization techniques bring us the same kind of repeatability and stability as full virtual machines, but they use fewer resources because not only are we reusing the hardware... we're reusing a portion of the software too. Containers share the host operating system's kernel instead of each booting an OS of their own.
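One rough way to see the "shared software" part for yourself: ask a process which kernel it's running on. This is only a sketch, and `kernel_info` is a name I made up. The idea is that on a Linux host, running it directly and running it inside a container on that host should report the same kernel release, while a full virtual machine would report its own. (If Docker itself is running inside a small Linux VM, as it does on Windows, a container would report that VM's kernel instead.)

```python
import platform

def kernel_info():
    """Report the kernel the current process is running on."""
    u = platform.uname()
    return {"system": u.system, "release": u.release, "machine": u.machine}

# Printed from the host and from inside a container on that host, the
# "release" value is what you'd compare.
print(kernel_info())
```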

Docker

Even better, if you screw up a dessert or set a station on fire, it has no effect on the other stations. You roll back to a time before everything reeked of burnt tofu (what kind of dessert are you making??), and you're good to go. If you're improving a recipe and decide after a few iterations that it's all awful oh my gosh why did I ever get into cooking I should've listened to mom and become a doctor, then you can just roll back to a week ago when you thought better of yourself.

I don't know if all such virtualization works this way, but Docker is built on layers. So if someone else created an "image" you want to use, you can spin up an instance of it and build on top of it. Your app runs in a "container" that's different from the original image, and if you like what you've got you can save a snapshot of it to create a new image layered on top of the original. You can create all kinds of snapshots over time, roll back to a specific one, build 5 projects from the same image that fork off in their own directions, whatever. Sounds a lot like source control via git et al. 🤔
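To convince myself the layering idea hangs together, here's a toy model in Python. To be clear, this is not how Docker is actually implemented and none of these names (`run`, `commit`, `base_image`) are Docker's API. I'm just assuming an image can be pictured as a stack of read-only layers, with a container adding one writable layer on top.

```python
from collections import ChainMap

# Toy model only: an "image" is a tuple of read-only layers (bottom first),
# and each layer is a dict mapping file paths to contents.
# Every name here is made up for illustration; none of it is Docker's API.

def run(image):
    """Start a 'container': a fresh writable layer stacked on the image's layers."""
    # ChainMap looks keys up in its first mapping, then falls through to the
    # rest, and sends all writes to the first mapping only.
    return ChainMap({}, *reversed(image))

def commit(container, image):
    """Snapshot the container's writable layer as a new image on top of the old one."""
    return image + (dict(container.maps[0]),)

base_image = ({"/etc/os-release": "debian", "/bin/sh": "shell"},)

container = run(base_image)
container["/app/main.py"] = "print('hello')"  # new file, lands in the writable layer
container["/etc/os-release"] = "patched"      # shadows the base file, base untouched

app_image = commit(container, base_image)     # base layer + one new layer
fresh = run(base_image)                       # "roll back": start again from the base

print(len(app_image))                      # 2
print(fresh["/etc/os-release"])            # debian
print(run(app_image)["/etc/os-release"])   # patched
```

The part that matters is that nothing the container does ever changes `base_image`, so any number of containers (or new images) can fork off from it.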

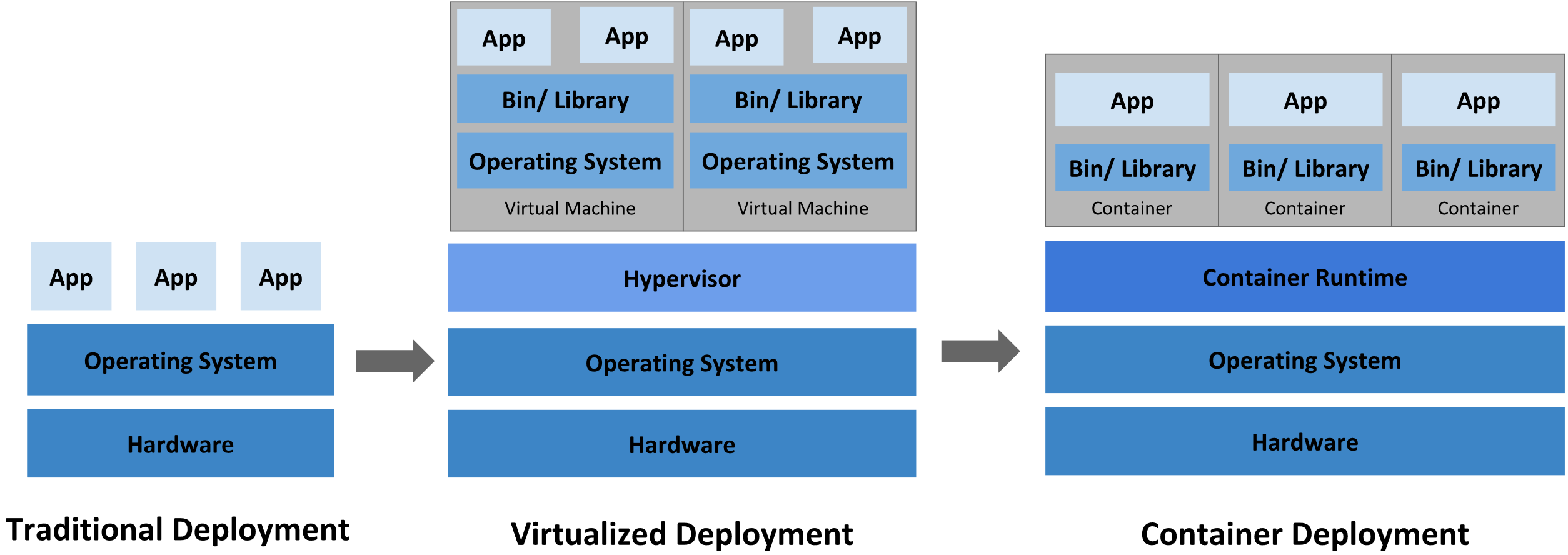

A Diagram (not mine)

Here's a nice diagram I'm not ashamed to say I swiped from "What is Kubernetes".

- Traditionally, every deployment required its own hardware, OS and apps.

- With hardware virtualization, a deployment requires only its own OS and apps.

- With OS-level virtualization, every deployment requires only its own lightweight space for an app to run in, called a container, so it can save data and such.

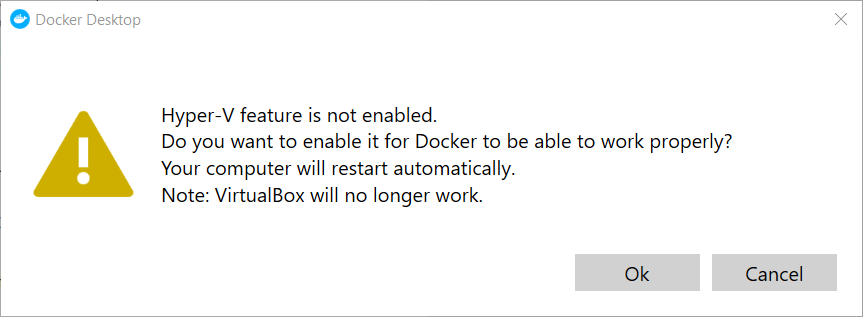

Hyper-V and Docker and VirtualBox, oh my

So this was a fun discovery. If you have Windows 10 and still want to use VirtualBox or VMware for virtual machines, don't just go for the alluring Docker for Windows installation. That path is a trail of tears and sadness.

- Docker for Windows requires Hyper-V to work.

- VirtualBox and VMware do not work with Hyper-V enabled.

So unfortunately, neither VirtualBox nor VMware plays well with Docker installed and Hyper-V enabled, but at least VMware should have this fixed sometime next year. For now, you may want to skip Docker for Windows, disable Hyper-V if you have it enabled, and install Docker Toolbox instead. Run the "hello world" docker app, and if all goes well you can use VirtualBox and Docker.
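If you'd rather script that "hello world" check than type it, here's a minimal sketch. It assumes the `docker` command is on your PATH and that the daemon is reachable from wherever you run it. The function name `docker_smoke_test` is my own invention. The command itself is the usual `docker run hello-world`, with `--rm` added so the finished container gets cleaned up.

```python
import shutil
import subprocess

# The standard smoke test: pull (if needed) and run the tiny hello-world image.
HELLO_CMD = ["docker", "run", "--rm", "hello-world"]

def docker_smoke_test(timeout=120):
    """Return True/False for success/failure, or None if the docker CLI isn't on PATH."""
    if shutil.which("docker") is None:
        return None
    try:
        result = subprocess.run(
            HELLO_CMD, capture_output=True, text=True, timeout=timeout
        )
    except subprocess.TimeoutExpired:
        return False
    return result.returncode == 0
```

Calling `docker_smoke_test()` gives `True` when the container ran and exited cleanly. `None` means the CLI wasn't found, which usually points at the install or your PATH rather than at Hyper-V.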

Learning Material (also not mine.. duh)

I found the following article helpful, but mostly stuck to videos:

I found the above article helpful, but as stated... mostly stuck to videos: 🙃
